
Optical Character Recognition (OCR) with Document AI (Python)

- GSP1138

- Overview

- Objectives

- Setup and requirements

- Task 1. Enable the Document AI API

- Task 2. Create and test a processor

- Task 3. Authenticate API requests

- Task 4. Install the client library

- Task 5. Make an online processing request

- Task 6. Make a batch processing request

- Task 7. Make a batch processing request for a directory

- Congratulations!

GSP1138

Overview

Document AI is a document understanding solution that takes unstructured data (for example, documents, emails, invoices, and forms) and makes it easier to understand, analyze, and consume. The API provides structure through content classification, entity extraction, advanced searching, and more.

In this lab, you will perform Optical Character Recognition (OCR) on PDF documents using Document AI and Python. You will explore how to make both Online (Synchronous) and Batch (Asynchronous) processing requests.

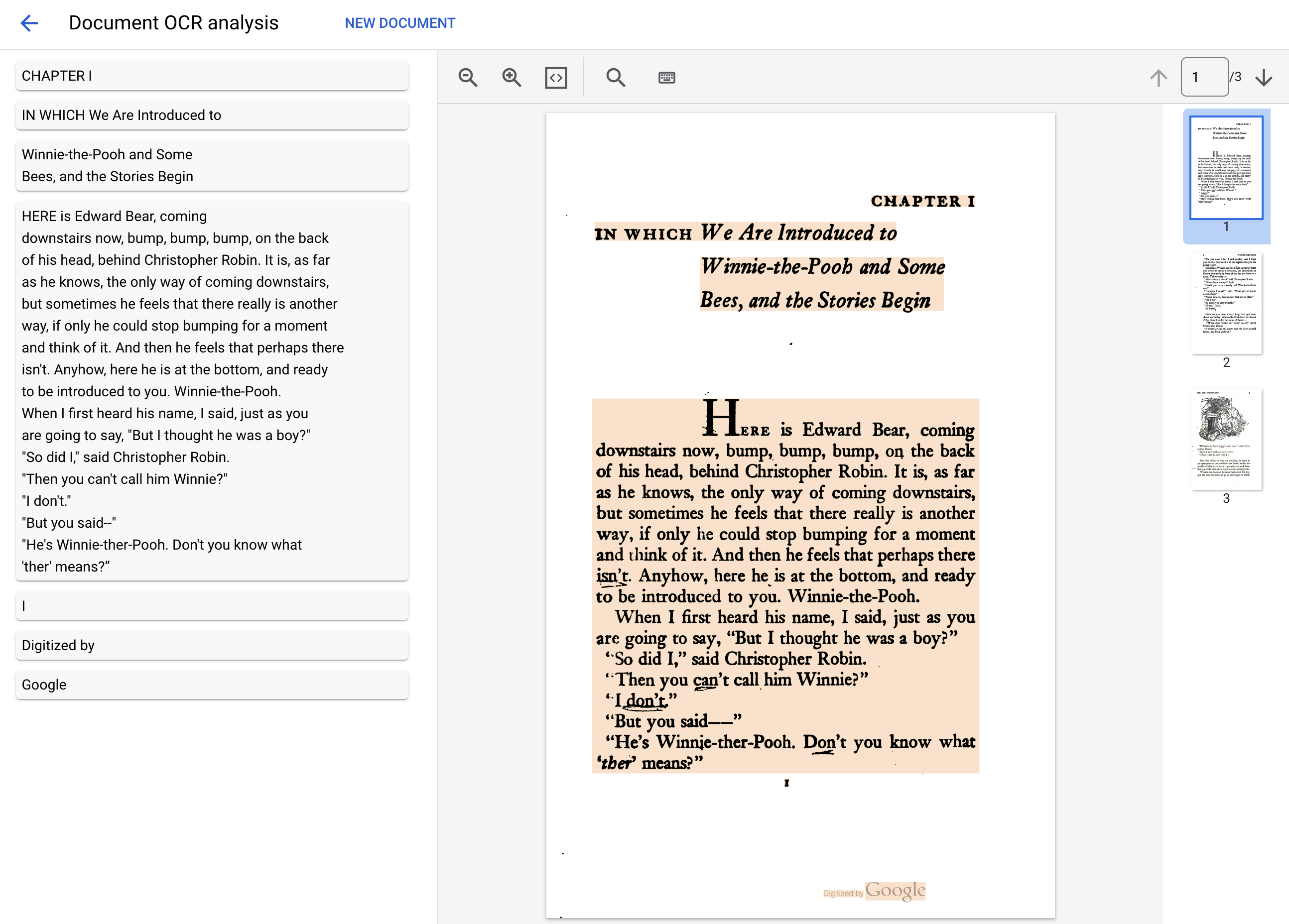

You will use a PDF file of the classic novel "Winnie the Pooh" by A.A. Milne, which recently entered the public domain in the United States. Google Books scanned and digitized this file.

Objectives

In this lab, you will learn how to perform the following tasks:

- Enable the Document AI API

- Authenticate API requests

- Install the client library for Python

- Use the online and batch processing APIs

- Parse text from a PDF file

Setup and requirements

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources will be made available to you.

This hands-on lab lets you do the lab activities yourself in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials that you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

- Time to complete the lab. Remember, once you start, you cannot pause a lab.

How to start your lab and sign in to the Google Cloud console

- Click the Start Lab button. If you need to pay for the lab, a pop-up opens for you to select your payment method. On the left is the Lab Details panel with the following:

- The Open Google Cloud console button

- Time remaining

- The temporary credentials that you must use for this lab

- Other information, if needed, to step through this lab

- Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

The lab spins up resources, and then opens another tab that shows the Sign in page.

Tip: Arrange the tabs in separate windows, side-by-side.

Note: If you see the Choose an account dialog, click Use Another Account.

- If necessary, copy the Username below and paste it into the Sign in dialog.

{{{user_0.username | "Username"}}}

You can also find the Username in the Lab Details panel.

- Click Next.

- Copy the Password below and paste it into the Welcome dialog.

{{{user_0.password | "Password"}}}

You can also find the Password in the Lab Details panel.

- Click Next.

Important: You must use the credentials the lab provides you. Do not use your Google Cloud account credentials.

Note: Using your own Google Cloud account for this lab may incur extra charges.

- Click through the subsequent pages:

- Accept the terms and conditions.

- Do not add recovery options or two-factor authentication (because this is a temporary account).

- Do not sign up for free trials.

After a few moments, the Google Cloud console opens in this tab.

Activate Cloud Shell

Cloud Shell is a virtual machine that is loaded with development tools. It offers a persistent 5 GB home directory and runs on Google Cloud. Cloud Shell provides command-line access to your Google Cloud resources.

- Click Activate Cloud Shell at the top of the Google Cloud console.

When you are connected, you are already authenticated, and the project is set to your Project_ID.

gcloud is the command-line tool for Google Cloud. It comes pre-installed on Cloud Shell and supports tab-completion.

- (Optional) You can list the active account name with this command:

- Click Authorize.

Output:

- (Optional) You can list the project ID with this command:

Output:

Note: For full documentation of gcloud in Google Cloud, refer to the gcloud CLI overview guide.

Task 1. Enable the Document AI API

Before you can begin using Document AI, you must enable the API.

- Using the Search Bar at the top of the console, search for "Document AI API", then click Enable to use the API in your Google Cloud project.

- Use the search bar to search for "Cloud Storage API" and, if it is not already enabled, click Enable.

- Alternatively, you can enable both APIs with the following gcloud commands:

You should see something like this:

Now, you can use Document AI!

Click Check my progress to verify the objective.

Task 2. Create and test a processor

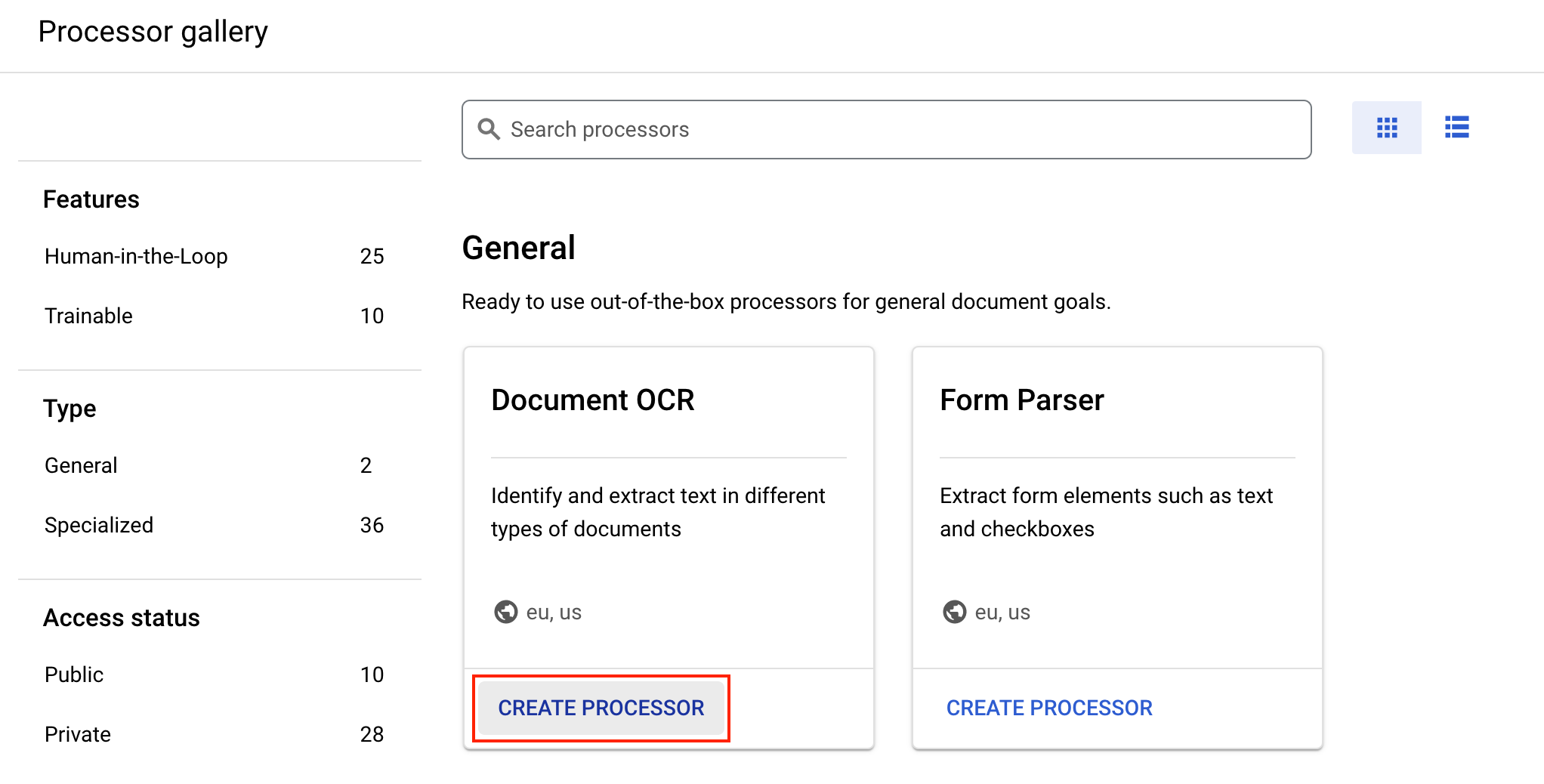

You must first create an instance of the Document OCR processor that will perform the extraction. This can be completed using the Cloud Console or the Processor Management API.
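The following is a minimal Python sketch of the Processor Management API route. It is not the lab's own code. It assumes the google-cloud-documentai library (installed in Task 4) and credentials configured as in Task 3. The functions `create_ocr_processor` and `processor_id_from_name` are hypothetical helpers introduced here. `OCR_PROCESSOR` is the processor type for Document OCR.

```python
"""Sketch: create a Document OCR processor through the Processor Management API."""


def processor_id_from_name(processor_name: str) -> str:
    """Return PROCESSOR_ID from projects/P/locations/L/processors/PROCESSOR_ID."""
    parts = processor_name.split("/")
    # Even positions must be the fixed collection names of the resource path.
    if len(parts) != 6 or parts[0::2] != ["projects", "locations", "processors"]:
        raise ValueError(f"Not a processor resource name: {processor_name!r}")
    return parts[5]


def create_ocr_processor(project_id: str, location: str, display_name: str = "lab-ocr") -> str:
    """Create a Document OCR processor and return its Processor ID (hypothetical helper)."""
    # Imported lazily so processor_id_from_name() works without the library installed.
    from google.api_core.client_options import ClientOptions
    from google.cloud import documentai

    # Document AI uses regional endpoints such as us-documentai.googleapis.com.
    client = documentai.DocumentProcessorServiceClient(
        client_options=ClientOptions(api_endpoint=f"{location}-documentai.googleapis.com")
    )
    processor = client.create_processor(
        parent=client.common_location_path(project_id, location),
        processor=documentai.Processor(display_name=display_name, type_="OCR_PROCESSOR"),
    )
    # processor.name is the full resource name; the Processor ID is its last segment.
    return processor_id_from_name(processor.name)
```

For example, `create_ocr_processor("YOUR_PROJECT_ID", "us")` would return the same kind of Processor ID that you copy from the console in the steps that follow.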

- From the Navigation Menu, under Artificial Intelligence, select Document AI.

- Click Explore Processors, and inside Document OCR, click Create Processor.

-

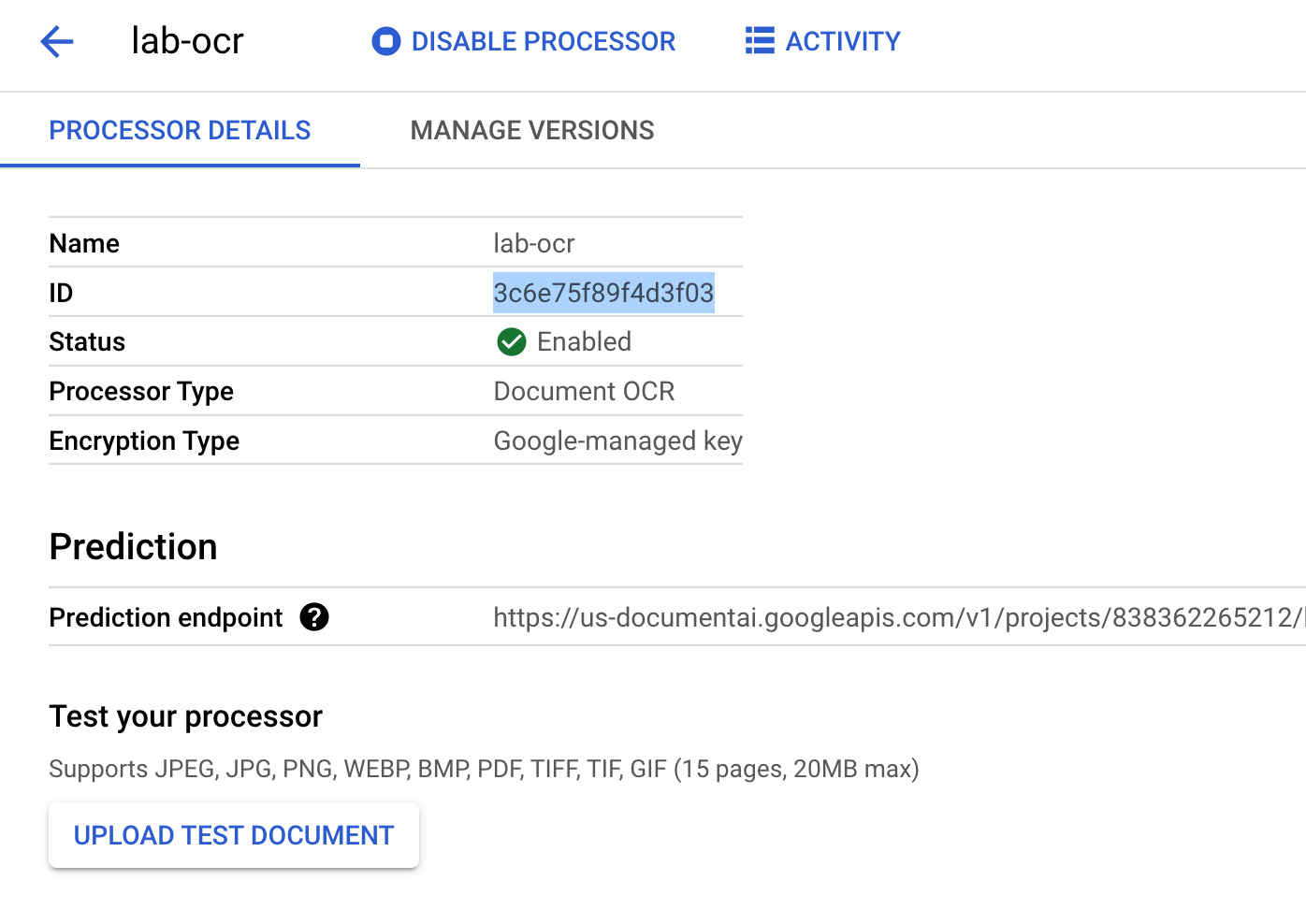

Give it the name

lab-ocrand select the closest region on the list. -

- Click Create to create your processor.

- Copy your Processor ID. You will use it in your code later.

- Download the PDF file below, which contains the first 3 pages of the novel "Winnie the Pooh" by A.A. Milne.

Now you can test out your processor in the console by uploading a document.

- Click Upload Test Document and select the PDF file you downloaded.

Your output should look like this:

Click Check my progress to verify the objective.

Task 3. Authenticate API requests

To make requests to the Document AI API, you must use a Service Account. A Service Account belongs to your project, and the Python client library uses it to make API requests. Like any other user account, a service account is represented by an email address. In this section, you will use the Cloud SDK to create a service account and then create the credentials you need to authenticate as the service account.

- First, open a new Cloud Shell window and set an environment variable with your Project ID by running the following command:

- Next, create a new service account to access the Document AI API by using:

- Give your service account permissions to access Document AI, Cloud Storage, and Service Usage by using the following commands:

- Create credentials that your Python code uses to log in as your new service account. Save these credentials as a JSON file, ~/key.json, by using the following command:

- Finally, set the GOOGLE_APPLICATION_CREDENTIALS environment variable, which the library uses to find your credentials. Set it to the full path of the credentials JSON file you created by using:

To read more about this form of authentication, see the guide.

Click Check my progress to verify the objective.

Task 4. Install the client library

- In Cloud Shell, run the following commands to install the Python client libraries for Document AI and Cloud Storage.

You should see something like this:

Now, you're ready to use the Document AI API!
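To confirm the installation from Python itself, the standard-library sketch below reports the installed version of each package. It assumes the libraries were installed as the pip distributions `google-cloud-documentai` and `google-cloud-storage`. The `installed_versions` helper is hypothetical.

```python
"""Sketch: report which versions of the two client libraries are installed."""
from importlib import metadata


def installed_versions(packages=("google-cloud-documentai", "google-cloud-storage")) -> dict:
    """Map each pip distribution name to its installed version, or None if missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions
```

In a `python3` session, `installed_versions()` should show a version string for both packages. A value of `None` means that install did not succeed.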

Upload the sample PDF to Cloud Shell

- From the Cloud Shell toolbar, click the three dots and select Upload.

- Select File > Choose Files and select the 3-page PDF file you downloaded earlier.

- Click Upload.

- Alternatively, you can download the PDF from a public Google Cloud Storage bucket using gcloud storage cp.

Task 5. Make an online processing request

In this step, you'll process the first 3 pages of the novel using the online processing (synchronous) API. This method is best suited for smaller documents that are stored locally. Check out the full processor list for the maximum pages and file size for each processor type.
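In an online request, the file bytes travel inside the request and the extracted Document comes back in the response. The sketch below illustrates that flow with the client library. It is not the lab's online_processing.py. It assumes the google-cloud-documentai library and the processor created in Task 2. The helper names `api_endpoint`, `guess_mime_type`, and `process_online` are hypothetical.

```python
"""Sketch: online (synchronous) OCR of a local PDF with the Document AI client library."""
import mimetypes


def api_endpoint(location: str) -> str:
    """Regional Document AI endpoint, for example 'us' -> 'us-documentai.googleapis.com'."""
    return f"{location}-documentai.googleapis.com"


def guess_mime_type(file_path: str) -> str:
    """Infer the MIME type Document AI needs from the file extension."""
    mime_type, _ = mimetypes.guess_type(file_path)
    if mime_type is None:
        raise ValueError(f"Cannot infer a MIME type for {file_path!r}")
    return mime_type


def process_online(project_id: str, location: str, processor_id: str, file_path: str) -> str:
    """Send one local file to the processor and return the extracted text."""
    # Imported lazily so the helpers above work without the library installed.
    from google.api_core.client_options import ClientOptions
    from google.cloud import documentai

    client = documentai.DocumentProcessorServiceClient(
        client_options=ClientOptions(api_endpoint=api_endpoint(location))
    )
    # Full resource name: projects/PROJECT_ID/locations/LOCATION/processors/PROCESSOR_ID
    name = client.processor_path(project_id, location, processor_id)

    # Online requests carry the file bytes inline.
    with open(file_path, "rb") as pdf:
        raw_document = documentai.RawDocument(content=pdf.read(), mime_type=guess_mime_type(file_path))

    request = documentai.ProcessRequest(name=name, raw_document=raw_document)
    result = client.process_document(request=request)
    return result.document.text
```

For example, `print(process_online("YOUR_PROJECT_ID", "us", "YOUR_PROCESSOR_ID", "Winnie_the_Pooh_3_Pages.pdf"))` should print the OCR text of the three pages.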

- In Cloud Shell, create a file called online_processing.py and paste the following code into it:

- Replace YOUR_PROJECT_ID, YOUR_PROJECT_LOCATION, YOUR_PROCESSOR_ID, and FILE_PATH with appropriate values for your environment.

FILE_PATH is the name of the file you uploaded to Cloud Shell in the previous step. If you didn't rename the file, it should be Winnie_the_Pooh_3_Pages.pdf, which is the default value and doesn't need to be changed.

- Run the code, which will extract the text and print it to the console.

You should see the following output:

Task 6. Make a batch processing request

Now, suppose that you want to read in the text from the entire novel.

- Online Processing has limits on the number of pages and file size that can be sent and it only allows for one document file per API call.

- Batch Processing allows larger or multiple files to be processed asynchronously.

In this section, you will process the entire "Winnie the Pooh" novel with the Document AI Batch Processing API and output the text into a Google Cloud Storage Bucket.

Batch processing uses Long Running Operations to manage requests asynchronously, so you make the request and retrieve the output differently than with online processing.
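The sketch below illustrates that flow with the client library. It is not the lab's batch_processing.py. `batch_process_documents()` returns a long-running operation immediately, and `operation.result()` blocks until the operation finishes. The operation's `BatchProcessMetadata` then lists where the JSON output for each input was written. The sketch assumes the google-cloud-documentai and google-cloud-storage libraries, a PDF input, and an arbitrarily chosen 400-second timeout. `parse_gcs_uri` and `batch_process` are hypothetical helpers.

```python
"""Sketch: submit a batch request, wait on the long-running operation, read the outputs."""
import re

_GCS_URI = re.compile(r"^gs://([^/]+)/?(.*)$")


def parse_gcs_uri(uri: str) -> tuple[str, str]:
    """Split gs://BUCKET/PREFIX into (BUCKET, PREFIX)."""
    match = _GCS_URI.match(uri)
    if not match:
        raise ValueError(f"Not a gs:// URI: {uri!r}")
    return match.group(1), match.group(2)


def batch_process(project_id, location, processor_id, gcs_input_uri, gcs_output_uri, timeout=400):
    """Return the text of every JSON output file (hypothetical helper)."""
    # Imported lazily so parse_gcs_uri() works without the libraries installed.
    from google.api_core.client_options import ClientOptions
    from google.cloud import documentai, storage

    client = documentai.DocumentProcessorServiceClient(
        client_options=ClientOptions(api_endpoint=f"{location}-documentai.googleapis.com")
    )
    request = documentai.BatchProcessRequest(
        name=client.processor_path(project_id, location, processor_id),
        input_documents=documentai.BatchDocumentsInputConfig(
            gcs_documents=documentai.GcsDocuments(
                documents=[documentai.GcsDocument(gcs_uri=gcs_input_uri, mime_type="application/pdf")]
            )
        ),
        document_output_config=documentai.DocumentOutputConfig(
            gcs_output_config=documentai.DocumentOutputConfig.GcsOutputConfig(gcs_uri=gcs_output_uri)
        ),
    )

    # Returns right away with a long-running operation handle.
    operation = client.batch_process_documents(request=request)
    # Block until the operation completes; raises on timeout or failure.
    operation.result(timeout=timeout)

    metadata = documentai.BatchProcessMetadata(operation.metadata)
    if metadata.state != documentai.BatchProcessMetadata.State.SUCCEEDED:
        raise RuntimeError(f"Batch process failed: {metadata.state_message}")

    storage_client = storage.Client()
    texts = []
    for status in metadata.individual_process_statuses:
        # Each input document has its own output folder in the bucket.
        bucket, prefix = parse_gcs_uri(status.output_gcs_destination)
        for blob in storage_client.list_blobs(bucket, prefix=prefix):
            if blob.content_type != "application/json":
                continue
            document = documentai.Document.from_json(blob.download_as_bytes(), ignore_unknown_fields=True)
            texts.append(document.text)
    return texts
```

Blocking on `result()` keeps the sketch short. For a longer job, you could instead check `operation.done()` periodically and do other work in between.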

However, the output will be in the same Document object format whether using online or batch processing.
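Because both modes produce a Document, the same parsing logic applies to either. The stdlib-only sketch below works on the Document's JSON form, such as the files batch processing writes to Cloud Storage. It assumes the lowerCamelCase names of the protobuf JSON mapping (`text`, `pages`, `paragraphs`, `layout.textAnchor.textSegments`, `shardInfo.shardIndex`). In that mapping, int64 values appear as strings and zero values are omitted. The helper names are hypothetical. Large batch outputs may be split into several shard files, so `merge_shards` orders them by shard index before joining them.

```python
"""Sketch: pull text out of Document JSON produced by online or batch processing."""


def layout_text(layout: dict, full_text: str) -> str:
    """Join the slices of the document text that a layout's textAnchor points to."""
    segments = layout.get("textAnchor", {}).get("textSegments", [])
    # Missing indices mean 0; int64 fields arrive as strings, hence int().
    return "".join(
        full_text[int(seg.get("startIndex", 0)) : int(seg.get("endIndex", 0))]
        for seg in segments
    )


def paragraphs(document: dict) -> list[str]:
    """Return the text of every paragraph, page by page."""
    text = document.get("text", "")
    return [
        layout_text(paragraph.get("layout", {}), text)
        for page in document.get("pages", [])
        for paragraph in page.get("paragraphs", [])
    ]


def merge_shards(shards: list[dict]) -> str:
    """Concatenate the text of output shards in numeric shardIndex order."""
    ordered = sorted(shards, key=lambda d: int(d.get("shardInfo", {}).get("shardIndex", 0)))
    return "".join(d.get("text", "") for d in ordered)
```

Sorting numerically matters. If you sorted shard files by name instead, shard 10 would land before shard 2.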

This section shows how to provide specific documents for Document AI to process. A later section will show how to process an entire directory of documents.

Upload PDF to Cloud Storage

The batch_process_documents() method currently accepts files from Google Cloud Storage. You can reference documentai_v1.types.BatchProcessRequest for more information on the object structure.

- Run the following command to create a Google Cloud Storage Bucket to store the PDF file and upload the PDF file to the bucket:
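If you would rather do this step from Python, the following is a minimal sketch using the google-cloud-storage library. The bucket name, the object name, and the `US` multi-region default are assumptions for illustration. `upload_pdf` and `gcs_uri` are hypothetical helpers. The returned `gs://` URI is the kind of value the next step asks for as GCS_INPUT_URI.

```python
"""Sketch: create a bucket (if needed) and upload the novel PDF with google-cloud-storage."""


def gcs_uri(bucket_name: str, object_name: str = "") -> str:
    """Build gs://BUCKET/OBJECT without doubling the slash."""
    return f"gs://{bucket_name}/{object_name.lstrip('/')}"


def upload_pdf(project_id: str, bucket_name: str, local_path: str, object_name: str, location: str = "US") -> str:
    """Upload a local PDF and return its gs:// URI (hypothetical helper)."""
    # Imported lazily so gcs_uri() works without the library installed.
    from google.cloud import storage

    client = storage.Client(project=project_id)
    bucket = client.bucket(bucket_name)
    # Create the bucket only if it does not exist yet.
    if not bucket.exists():
        bucket = client.create_bucket(bucket_name, location=location)
    bucket.blob(object_name).upload_from_filename(local_path, content_type="application/pdf")
    return gcs_uri(bucket_name, object_name)
```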

Using the batch_process_documents() method

- Create a file called batch_processing.py and paste in the following code:

- Replace YOUR_PROJECT_ID, YOUR_PROJECT_LOCATION, YOUR_PROCESSOR_ID, GCS_INPUT_URI, and GCS_OUTPUT_URI with the appropriate values for your environment.

- For GCS_INPUT_URI, use the URI of the file you uploaded to your bucket in the previous step, i.e., gs://./Winnie_the_Pooh.pdf

- For GCS_OUTPUT_URI, use the URI of the bucket you created in the previous step, i.e., gs://.

- Run the code. You should see the full novel text extracted and printed in your console.

Your output should look something like this:

Great! You have now successfully extracted text from a PDF file using the Document AI Batch Processing API.

Click Check my progress to verify the objective.

Task 7. Make a batch processing request for a directory

Sometimes, you may want to process an entire directory of documents, without listing each document individually. The batch_process_documents() method supports input of a list of specific documents or a directory path.

In this section, you will learn how to process a full directory of document files. Most of the code is the same as in the previous step; the only difference is the GCS URI sent with the BatchProcessRequest.
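The standard-library sketch below makes that difference concrete. It builds the JSON body of a batch request in the shape of the REST `batchProcess` method. The client library mirrors these fields. For specific files, you pass `documentai.GcsDocuments` with a list of `documentai.GcsDocument`. For a directory, you pass `documentai.GcsPrefix(gcs_uri_prefix=...)`. Either one is wrapped in `documentai.BatchDocumentsInputConfig`. The helper names and bucket URIs are hypothetical.

```python
"""Sketch: a directory request differs from a file-list request only in inputDocuments."""


def documents_input(gcs_uris, mime_type="application/pdf") -> dict:
    """inputDocuments for an explicit list of files."""
    return {"gcsDocuments": {"documents": [{"gcsUri": uri, "mimeType": mime_type} for uri in gcs_uris]}}


def prefix_input(gcs_uri_prefix: str) -> dict:
    """inputDocuments for every file under a Cloud Storage prefix."""
    return {"gcsPrefix": {"gcsUriPrefix": gcs_uri_prefix}}


def batch_request_body(input_documents: dict, gcs_output_uri: str) -> dict:
    """Full request body; the output configuration is identical in both cases."""
    return {
        "inputDocuments": input_documents,
        "documentOutputConfig": {"gcsOutputConfig": {"gcsUri": gcs_output_uri}},
    }
```

For example, `batch_request_body(prefix_input("gs://my-bucket/multi-document"), "gs://my-bucket/output")` describes a directory request. Swapping in `documents_input([...])` yields the single-file request from the previous task.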

- Run the following command to copy the sample directory (which contains multiple pages of the novel in separate files) to your Cloud Storage bucket.

You can read the files directly or copy them into your own Cloud Storage bucket.

- Create a file called batch_processing_directory.py and paste in the following code:

- Replace the PROJECT_ID, LOCATION, PROCESSOR_ID, GCS_INPUT_PREFIX, and GCS_OUTPUT_URI with the appropriate values for your environment.

- For GCS_INPUT_PREFIX, use the URI of the directory you uploaded to your bucket in the previous section, i.e., gs://./multi-document

- For GCS_OUTPUT_URI, use the URI of the bucket you created in the previous section, i.e., gs://.

- Use the following command to run the code. You should see the extracted text from all of the document files in the Cloud Storage directory.

Your output should look something like this:

Great! You've successfully used the Document AI Python Client Library to process a directory of documents using a Document AI Processor, and output the results to Cloud Storage.

Click Check my progress to verify the objective.

Congratulations!

Congratulations! In this lab, you learned how to use the Document AI Python Client Library to process a directory of documents using a Document AI Processor, and output the results to Cloud Storage. You also learned how to authenticate API requests using a service account key file, install the Document AI Python Client Library, and use the Online (Synchronous) and Batch (Asynchronous) APIs to process requests.

Next steps/Learn more

Check out the following resources to learn more about Document AI and the Python Client Library:

- The Future of Documents - YouTube Playlist

- Document AI Documentation

- Document AI Python Client Library

- Document AI Samples Repository

Google Cloud training and certification

...helps you make the most of Google Cloud technologies. Our classes include technical skills and best practices to help you get up to speed quickly and continue your learning journey. We offer fundamental to advanced level training, with on-demand, live, and virtual options to suit your busy schedule. Certifications help you validate and prove your skill and expertise in Google Cloud technologies.

Manual Last Updated: October 17, 2023

Lab Last Tested: October 17, 2023

Copyright 2024 Google LLC All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.